...

Description

Introduction

This project website collects code, talks, and papers on a hierarchical probabilistic model for ordinal matrix factorization.

Two algorithms are used for inference: one based on Gibbs sampling and one based on variational Bayes. Both can be applied to the factorization of very large matrices with missing entries.

Ordinal matrix factorization in a nutshell


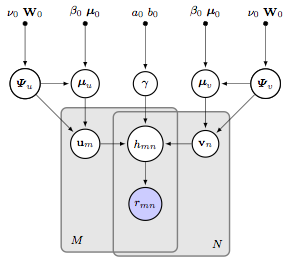

A hierarchical model for factorizing an M-by-N matrix with (sparse) ordinal entries r. The vectors u and v represent the latent factors, while h gives the "hidden" dot product. Hierarchical Gaussian priors are placed on u and v.
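To make the caption concrete, here is a minimal generative sketch in Python. It is not the Matlab package from this site, and several details are assumptions made only for illustration: the function name `sample_ordinal_matrix`, the unit variances, the Gaussian noise added to the dot product, and the use of fixed cutpoints to turn h into an ordinal value r. The page itself only states that u and v are latent factors with hierarchical Gaussian priors and that h is the hidden dot product.

```python
import random


def sample_ordinal_matrix(M, N, K, cutpoints, noise_std=1.0,
                          observed_frac=0.2, seed=0):
    """Draw a sparse M-by-N ordinal matrix from a toy version of the model.

    Assumptions (not taken from the project page): unit variances,
    Gaussian noise on h, and fixed, sorted cutpoints.
    """
    rng = random.Random(seed)

    # Hierarchical Gaussian priors: draw per-dimension means first,
    # then draw each latent factor around those means.
    mu_u = [rng.gauss(0.0, 1.0) for _ in range(K)]
    mu_v = [rng.gauss(0.0, 1.0) for _ in range(K)]
    u = [[rng.gauss(mu_u[k], 1.0) for k in range(K)] for _ in range(M)]
    v = [[rng.gauss(mu_v[k], 1.0) for k in range(K)] for _ in range(N)]

    ratings = {}  # sparse storage: (row, column) -> ordinal value
    for i in range(M):
        for j in range(N):
            if rng.random() >= observed_frac:
                continue  # this entry is missing
            # h: the "hidden" dot product, perturbed by Gaussian noise.
            h = sum(a * b for a, b in zip(u[i], v[j])) + rng.gauss(0.0, noise_std)
            # Ordinal value = 1 + number of cutpoints that h exceeds.
            ratings[(i, j)] = 1 + sum(h > c for c in cutpoints)
    return u, v, ratings


# Four cutpoints give ordinal values in 1..5, as in a five-star rating scale.
u, v, ratings = sample_ordinal_matrix(6, 5, 3, [-2.0, -0.5, 0.5, 2.0], seed=1)
print(len(ratings), "observed entries out of", 6 * 5)
```

Storing only the observed entries in a dictionary mirrors the sparsity mentioned in the caption: inference would then need to touch only the (row, column) pairs that actually carry a rating.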


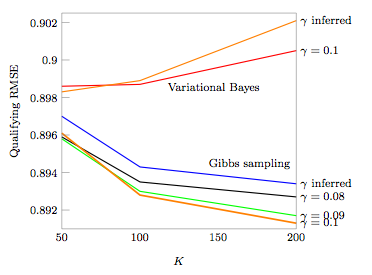

The Netflix data set consists of around 100 million ratings. The figure shows the root-mean-squared error (RMSE) scores for the Netflix Prize competition. K represents the dimensionality of u and v. For K=200, the model contains around 100 million random variables. Despite the large number of ratings and model parameters, Gibbs sampling outperforms variational Bayes.
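As a small illustration of the two quantities in this caption, the sketch below computes RMSE over observed entries and counts latent-factor variables for a given K. The helper names `rmse` and `latent_variable_count` are hypothetical. The user and movie counts (480,189 and 17,770) are the commonly cited sizes of the Netflix Prize training set and do not come from this page, and the count covers only the entries of u and v, so it is one plausible reading of the "around 100 million" figure rather than the authors' own accounting.

```python
import math


def rmse(predicted, actual):
    """Root-mean-squared error over the observed (row, column) entries."""
    if not actual:
        raise ValueError("need at least one observed rating")
    squared_error = sum((predicted[key] - r) ** 2 for key, r in actual.items())
    return math.sqrt(squared_error / len(actual))


def latent_variable_count(num_rows, num_cols, K):
    """Number of scalar entries in all K-dimensional factors u and v."""
    return (num_rows + num_cols) * K


# Toy example with three observed ratings.
actual = {(0, 0): 4, (0, 2): 1, (1, 1): 5}
predicted = {(0, 0): 3.5, (0, 2): 2.0, (1, 1): 5.0}
print(rmse(predicted, actual))

# Assumed Netflix Prize sizes: 480,189 users and 17,770 movies.
print(latent_variable_count(480189, 17770, 200))  # 99591800
```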

...

Talks and Videos

Large-scale Bayesian Inference for Collaborative Filtering

video | slides

NIPS Workshop on Approximate Bayesian Inference in Continuous/Hybrid Models, 2007.

...

Software

Matlab package

zip

Additional code and data available upon request.

...

Publications

Large-scale Ordinal Collaborative Filtering

pdf

1st Workshop on Mining the Future Internet, Future Internet Symposium, Berlin, September 2010.

A hierarchical model for ordinal matrix factorization

pdf

Statistics and Computing, vol. 21, 2011.